Don't Feed Your Horse Petrol

Two builders walk into an AI conversation. One's building sales intelligence robots. One's vibe-coding autonomous agents. Both agree: you're probably feeding your horse petrol.

The Future of AI Belongs to Those Who Build Their Own Robots

A conversation between Govind Davis and Morten A. Evensen

Founders, Builders, and Reluctant Futurists

Govind Davis is a business content artist and AI builder based in the Greater Seattle Area.

Morten A. Evensen is an experienced IT sector leader based in Oslo, Norway, and co-founder of Brewra Ventures and Brewra AI.

The Horse Doesn't Run on Petrol

Imagine someone hands you the most disruptive technology in human history.

Your first instinct?

Plug it into whatever you were already doing. Inject it into the existing process. Patch it into the broken workflow. It's almost irresistible — and almost certainly wrong.

"If you're going to introduce AI into anything, just taking the existing workflow and injecting AI into it is like, at the advent of the automobile, starting to feed your horse petrol." — Morten Evensen

That's the analogy Morten Evensen dropped with the precision of a man who has spent the better part of a year unlearning everything he thought he knew about AI. And if you felt a flicker of recognition reading it — maybe even a little embarrassment — you're not alone. Most of the AI "transformation" happening right now is exactly this: petrol in the horse. New fuel. Same broken animal.

The conversation this article is drawn from is a live, unscripted exchange between Govind and Morten — two people who have been deep in the weeds building things with AI, not just theorizing about it.

What emerged wasn't a clean roadmap.

It was something messier and more interesting: a genuine intellectual collision between two frameworks for understanding what AI actually is, what it's about to become, and how you build for the world that's coming rather than the one that's leaving.

Act I: The Problem with LLM Wrappers (and Why They're Already Dead)

Let's start with what's obvious to anyone paying attention: building a product that just wraps a large language model and hands it back to users is not a business. It's a feature. And features get absorbed by platforms.

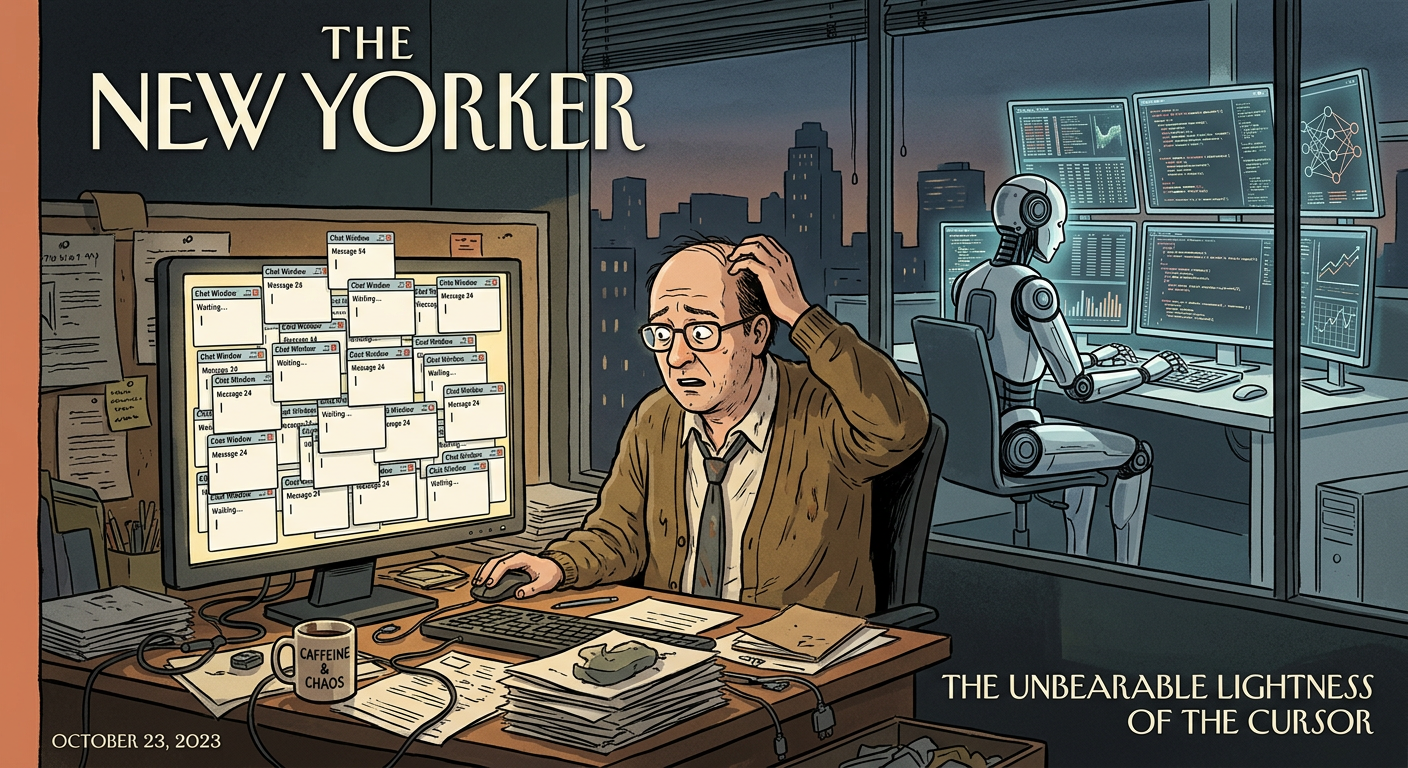

"LLM wrappers are, like, dead," Govind says mid-conversation, with the bluntness of someone who's seen this play out. Morten agrees, adding that there's an over-emphasis on the conversational nature of AI — that the chat window interface we were introduced to is a historical artifact of how AI was developed and tested, not a design decision that serves users.

"I think it's an inheritance from the development environments of AI, where talking to the machine was like the ultimate test," Morten explains. "But you don't really want that. Having all these agents is like having a team of employees. You don't want to be talking to your employee all the time. You want to instruct them, and they should just come back and tell you when it's done."

This insight cuts to the core of where AI product design is heading. The early interface paradigm — query in, answer out, repeat — made sense when language models were novelties.

It makes less sense when the goal is autonomous, continuous intelligence that operates on your behalf while you do other things. The chat window is to AI agents what the command line was to personal computing: foundational, but not the final form.

Act II: Jensen's Robots and the Architecture of What Comes Next

Govind brings up a moment that stopped him cold: Jensen Huang, CEO of NVIDIA, calling Physical AI the most significant technical innovation of our era.

At CES 2026, Huang declared that "the ChatGPT moment for physical AI is here — when machines begin to understand, reason and act in the real world." He wasn't talking about chatbots. He was describing a progression: perception AI, generative AI, agentic AI, physical AI — a march from software understanding the world to software inhabiting it.

"I knew it was gonna end here, and I didn't exactly know how," Govind says. "And then I understood what was going on. It's like, yeah — he's totally right."

What Govind is describing is the inevitable destination of agentic AI: not a chatbot that helps you draft emails, but a containerized, continuously learning entity — running on its own server, accessing its own vector database, operating on its own proprietary knowledge — that can act in the world. A robot. Just digital.

"If you haven't built your first robot from scratch yourself, then you have missed the point of AI completely." — Govind

This isn't just rhetorical flourish. The technical architecture Govind is describing is real and rapidly becoming standard practice. You run a local LLM — or a fine-tuned open-source model — inside a Docker container on a DigitalOcean droplet. That container has access to a vector database (the mechanism by which AI systems store and retrieve information based on semantic similarity, not just keyword matching).

It ingests external signals.

It applies a set of agent specifications.

And it produces outputs — recommendations, drafted messages, decision queues — with escalating autonomy.
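To make the "semantic similarity" idea concrete, here is a minimal sketch of the retrieval step. It is an illustration, not the setup Govind actually runs: `TinyVectorStore`, `embed`, and the sample notes are hypothetical names invented for this example, and the bag-of-words `embed` function is a deliberate stand-in for a learned embedding model so the code runs with no dependencies. A real vector database would rank by the same kind of similarity score, but over embeddings that capture meaning rather than shared words.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector.
    Assumption: a real system would call a learned embedding model here."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """Hypothetical in-memory store: keeps (text, vector) pairs and ranks them."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[str]:
        query_vec = embed(query)
        ranked = sorted(
            self._items,
            key=lambda item: cosine(query_vec, item[1]),
            reverse=True,
        )
        return [text for text, _ in ranked[:k]]


store = TinyVectorStore()
store.add("pricing objections from enterprise buyers")
store.add("onboarding checklist for new customers")
store.add("enterprise buyers asking about security reviews")

top_hit = store.search("enterprise pricing", k=1)
```

The design point is that retrieval returns the closest stored items by vector distance, so the quality of the agent's "memory" depends on the embedding model you swap in for `embed`.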

Govind admits he's been going down a rabbit hole — vibe coding with Windsurf, wrestling with Ubuntu server configurations at 11pm, deploying React components inside Ghost CMS.

He's not a developer. But he's building. And that's exactly the point: the barrier to building your first autonomous agent has collapsed so fast that the primary limitation is no longer technical. It's imaginative.

The Architecture: Where Intelligence Actually Lives

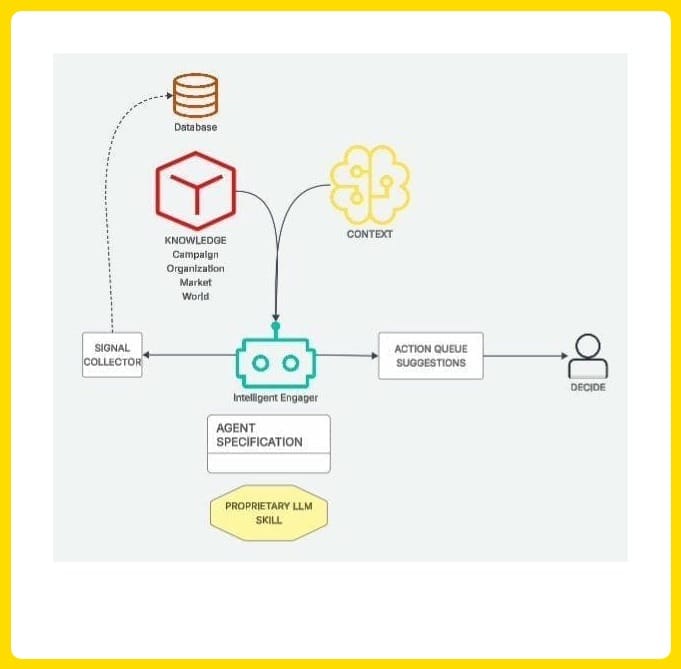

Below is the framework Govind sketched during the conversation — a diagram that captures the essential architecture of an agentic intelligence system for GTM (and, arguably, for any domain where continuous informed decision-making creates value).

The Intelligent Engager Architecture — as sketched during the live conversation. Signal Collector → Knowledge Base + Context → Agent Specification → Action Queue → Human Decision.

The components worth understanding in detail:

Signal Collector: The autonomous process that continuously ingests external signals — LinkedIn posts, news, Forrester reports, competitor moves, anything within a defined domain. Not a one-time query, but a continuous inference loop.

Knowledge Base: Campaign data, organizational context, product information, and world knowledge — vectorized for retrieval. This is where the agent's "memory" lives.

Context: The setting — what the agent is trying to accomplish right now. Who is the audience? What are the current goals? What constraints apply? This is the mission briefing.

Agent Specification: The secret sauce. This is how the agent is instructed to behave: its decision logic, its priorities, its "personality" for the domain. This is where the competitive advantage is encoded, and crucially, where it is hidden. Someone looking only at the outputs cannot easily reverse-engineer the specification.

Proprietary LLM Skill: The intelligence layer — ideally not just a pass-through to GPT-4, but a model fine-tuned or trained on domain-specific knowledge that gives it skill, not just information. More on this later.

Action Queue + Human Decision: The final output — not autonomous action, but curated recommendations that a human reviews and approves. The agent suggests; the human decides. Judgment stays on the human side of the table.
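The components above can be wired together in a few dozen lines. What follows is a rough sketch under loud assumptions: every class and function name (`Signal`, `AgentSpec`, `Recommendation`, `run_cycle`, `human_review`) is hypothetical, the knowledge base is a plain list of strings, and simple keyword matching stands in for the LLM layer. It shows the shape of the flow from signal to human decision, not how either builder's system is implemented.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Signal:
    source: str  # e.g. "linkedin", "news"
    text: str


@dataclass
class AgentSpec:
    """Hypothetical agent specification: what the agent is told to care about."""
    keywords: tuple
    min_hits: int = 1


@dataclass
class Recommendation:
    signal: Signal
    reason: str
    approved: Optional[bool] = None  # stays None until a human decides


def run_cycle(signals, knowledge_base, context, spec):
    """One pass: filter signals by the spec, attach context, build the action queue.
    Keyword matching is a placeholder for the model's judgment."""
    queue = []
    for signal in signals:
        hits = [k for k in spec.keywords if k in signal.text.lower()]
        if len(hits) < spec.min_hits:
            continue
        related = [doc for doc in knowledge_base
                   if any(k in doc.lower() for k in hits)]
        reason = f"{context}: matched {hits}; related notes: {len(related)}"
        queue.append(Recommendation(signal, reason))
    return queue


def human_review(queue, decide: Callable[[Recommendation], bool]):
    """The agent suggests; `decide` represents the human's call on each item."""
    for rec in queue:
        rec.approved = decide(rec)
    return [rec for rec in queue if rec.approved]


signals = [
    Signal("linkedin", "Our CRM migration is a nightmare"),
    Signal("news", "Local bakery wins award"),
]
knowledge_base = ["Case study: CRM migration in 6 weeks", "Pricing sheet 2026"]
spec = AgentSpec(keywords=("crm migration", "data quality"))

queue = run_cycle(signals, knowledge_base, "Q2 outbound", spec)
approved = human_review(queue, decide=lambda rec: True)  # stand-in for a real reviewer
```

Note that nothing in `run_cycle` takes action. It only fills the queue, which keeps the judgment step outside the agent, as the last component describes.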

Act III: The Eternal Sales Problem — and Why It's the Right One to Solve

Morten asks a question that sounds simple but is actually a strategic acid test: "Are you solving for a fundamental economic process, or a transient workflow?"

It's a crucial distinction.

Transient workflows — stamping mail, cold-calling from phone books, manually entering leads into a CRM — get automated away. They existed because of constraints that technology eventually dissolves. But fundamental economic processes? Goods and buyers need to find each other. They did in 3000 BCE Mesopotamia. They do today. They will in 2126. The form changes. The function does not.

Govind adds a resonant historical note: even ancient Sumerian clay tablets contained what we'd recognize as a modern cap table — investors, amounts, expected returns. The infrastructure of business relationships is older than the internet by several millennia, and no amount of AI is going to dissolve the fundamental human (and increasingly machine-to-machine) need to discover, trust, and transact.

"Sales, farming, transportation of goods — these are fundamental economic processes that won't go away, no matter how much AI. They will change fundamentally. But it's still the same thing." — Morten Evensen

Morten's insight about the sales funnel is particularly sharp. The funnel — awareness, interest, consideration, decision — is an industrial metaphor. It treats human buying behavior like a distillery: pour in a thousand leads, get ten clients out. The model worked when leads were scarce. But today, you can import millions of prospects from Apollo or LinkedIn Sales Navigator in an afternoon. Scarcity isn't the constraint anymore. Relevance is.

This is where agentic AI changes everything. The old constraint was finding leads. The new challenge is the signal-to-noise problem at scale: of the ten thousand people you could theoretically reach, which three are right now, today, in a state of active need that makes your message land instead of bounce?

Market data backs this up starkly. In 2025, sales teams sent more emails and ran more automated sequences than ever before, yet average reply rates fell below 3% and SDR productivity dropped 22%. More volume didn't produce more signal. It produced more noise. The problem wasn't effort. It was a lack of intelligence about when to try.

Act IV: Signals, Intelligence, and the Covert Operations Analogy

Morten's inspiration for the architecture of Brewra comes from an unexpected place: the world of intelligence agencies. Not the Bond film version — the operational one. How does a modern intelligence organization actually function?

It has two arms: an analytical arm that collects, processes, and synthesizes information, and an operative arm that takes action. The analytical arm doesn't act. The operative arm doesn't analyze. The quality of the operations depends entirely on the quality of the intelligence. And the critical activity at the center — the judgment call — stays with a human decision-maker who reviews the intelligence and decides what to do.

Apply this to sales and the architecture snaps into focus. Your agentic team is the analytical arm — continuously scanning for signals: a prospect who matches your ideal customer profile complaining about a problem you solve on LinkedIn; a new Forrester report on a topic directly relevant to your offering; a job posting suggesting a company is about to undergo a transformation you could accelerate. These signals, individually, are noise. In combination, against a well-defined context, they become intelligence.
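One way to picture "noise individually, intelligence in combination" is a per-account score in which no single signal type clears the bar on its own. This is a sketch only: the signal names, the `WEIGHTS` values, and the `THRESHOLD` are invented for illustration and are not drawn from Brewra or from the conversation.

```python
from collections import defaultdict

# Hypothetical weights: each is below the threshold, so no single signal
# type is enough to surface an account on its own.
WEIGHTS = {
    "icp_match": 0.40,       # account fits the ideal customer profile
    "pain_post": 0.35,       # someone there posted about a problem you solve
    "analyst_report": 0.15,  # relevant analyst coverage of their space
    "hiring_signal": 0.30,   # job posting hinting at an upcoming transformation
}
THRESHOLD = 0.70


def score_accounts(observations):
    """Sum the weights of the distinct signal types seen for each account."""
    seen = defaultdict(set)
    for account, kind in observations:
        seen[account].add(kind)  # repeated signals of one type count once
    return {
        account: round(sum(WEIGHTS.get(kind, 0.0) for kind in kinds), 2)
        for account, kinds in seen.items()
    }


def actionable(observations):
    """Accounts whose combined score reaches the threshold, sorted by name."""
    scores = score_accounts(observations)
    return sorted(a for a, s in scores.items() if s >= THRESHOLD)


observations = [
    ("acme", "icp_match"),
    ("acme", "pain_post"),
    ("acme", "pain_post"),       # duplicate type, ignored by the set
    ("globex", "analyst_report"),
]
scores = score_accounts(observations)
shortlist = actionable(observations)
```

Here "acme" surfaces because two weak signals line up, while "globex" stays below the line with one. In practice the weights would come from the context and the agent specification rather than a hard-coded table.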

"AI has changed intelligence gathering completely because all these sources are continuously being plowed through, and still, in the end, a human has to sit down and make a judgment call." — Govind

The key move Brewra makes — and that Govind independently identified in his own architecture — is building a thumbs-up/thumbs-down feedback loop into the system. When the agent surfaces a signal, you tell it whether it was relevant or not. That behavioral data becomes part of the shared context, continuously refining the system's understanding of what good looks like for your specific situation. The system learns your judgment without trying to replace it.
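The feedback loop can be sketched as a small model whose feature weights move up or down with each thumbs-up or thumbs-down. This is an assumption about how such a loop might work, not a description of Brewra's implementation: `FeedbackModel`, the feature strings, and the fixed-step additive update are all hypothetical, chosen because they are the simplest thing that shows judgment accumulating as data.

```python
class FeedbackModel:
    """Hypothetical relevance model that learns from thumbs-up/down votes."""

    def __init__(self, learning_rate: float = 0.1) -> None:
        self.learning_rate = learning_rate
        self.weights: dict[str, float] = {}

    def score(self, features) -> float:
        """Higher score means 'more like signals the human approved before'."""
        return sum(self.weights.get(f, 0.0) for f in features)

    def record(self, features, thumbs_up: bool) -> None:
        """Nudge every feature of the rated signal toward the human's verdict."""
        step = self.learning_rate if thumbs_up else -self.learning_rate
        for f in features:
            self.weights[f] = self.weights.get(f, 0.0) + step


model = FeedbackModel()
model.record({"source:linkedin", "topic:pricing"}, thumbs_up=True)
model.record({"source:news", "topic:funding"}, thumbs_up=False)

pricing_score = model.score({"topic:pricing"})
funding_score = model.score({"topic:funding"})
```

After two votes the model already ranks a pricing-related signal above a funding-related one. The human still makes every call; the system only gets better at deciding what to put in front of them.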

This is the right model. Not because AI can't eventually make these calls — it probably can — but because the value of the human in the loop right now is not computational. It's contextual. There's tacit knowledge in a seasoned salesperson's "gut feeling" that cannot be fully articulated into a prompt, and until it can be learned from feedback data, it shouldn't be delegated away.

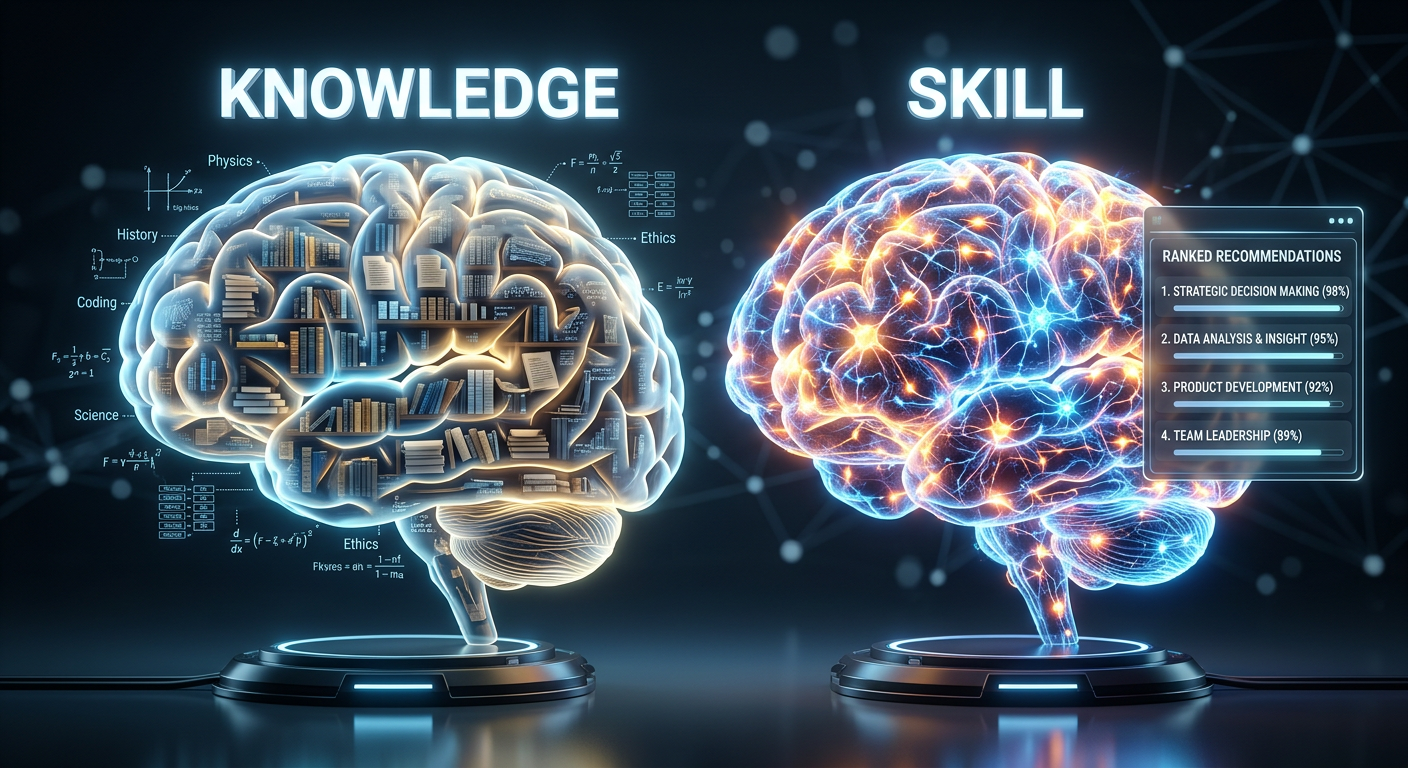

Act V: The Moat is in the Skill, Not the Knowledge

Here's where the conversation gets genuinely technical and, for anyone building in this space, genuinely important.

The inevitable question for any AI product company is: what's your moat? Why can't someone just spin up Claude Code or the GPT-4 API and build the same thing in a weekend? It's a fair challenge in a world where the underlying models are increasingly commoditized.

Morten frames the answer in terms of a Venn diagram: your competitive edge lives at the intersection of high-quality public signal intelligence and your proprietary organizational data. The public data is available to everyone; the organizational data is yours alone; but the ability to combine them intelligently, and continuously refine that combination, is the moat.

Govind goes a layer deeper and makes a distinction that matters enormously: it's not just the knowledge that creates the advantage. It's the skill.

"Knowledge is the information you have. You could give somebody all the knowledge in the world, but if they don't have skill in using that knowledge... the proprietary LLM is not a knowledge base. It's the skill." — Govind

In AI terms, this translates to the difference between a retrieval system and a trained reasoning model. A vector database full of your CRM history, sales scripts, and product documentation is a knowledge base. Useful, but reproducible — anyone can build one. But a model fine-tuned on years of winning and losing sales conversations, trained to recognize the behavioral patterns that predict a real buying signal versus a polite brush-off, tuned to produce recommendations that are right for your specific domain and context? That's a skill. And it compounds.

This is why Govind invokes the idea of a "million private LLMs" — the prediction, attributed to an AI researcher during the conversation, that the future of AI infrastructure will be an explosion of highly specialized, privately trained models. Not everyone connects to the same Gemini Ultra endpoint. Everyone runs their own fine-tuned model that understands their domain better than any general-purpose system ever could, at a fraction of the token cost.

And this is where open source becomes the crucial unlock. Models like Meta's Llama family, Mistral, and an expanding ecosystem of domain-specialized open weights make it possible for a small team to train and deploy a private model without paying frontier-API prices at scale.

The cost argument isn't philosophical — it's existential.

If your autonomous agent is calling GPT-4 every time it processes a signal, you're not building a business; you're renting one.

Act VI: The Equilibrium Always Resets

Near the end of the conversation, Govind introduces an observation about competitive advantage that has echoed through every era of technological disruption: first-movers get an unfair advantage, then everyone catches up, then the advantage vanishes and you have a new normal.

The Romans had the ballista. For a while, it was decisive. Then everyone had one, and you needed the next thing. The automobile gave early adopters a logistics edge. Then everyone had cars, and the transportation industry was just a bigger, faster version of what it had always been — not a fundamentally different competitive landscape.

Morten's counter is worth sitting with: "When everyone had cars, was the transportation industry more productive or less productive than before?" The answer is obviously more. Technology changes the equilibrium, but it raises the floor for everyone. What was once a competitive advantage becomes a cost of doing business. Software developers don't disappear because AI can write code; they become responsible for more output with the same headcount. The sales development representative doesn't disappear because an agent can research signals; they close more deals because their research is better than any human team could produce alone.

The insight for builders is this: the window to build a proprietary advantage is now. In two years, everyone will have an agentic research team. The question is whether the one you've built, with its trained model and accumulated behavioral context and refined agent specification, is better than the one someone spun up in a weekend from a template.

That gap — between compounding, domain-specific intelligence and generic automation — is where durable businesses get built.

What to Build, and How to Think About It

If you're taking notes, here's the distilled framework from this conversation:

1. Ask the acid test question first. Are you solving for a fundamental economic process (selling, transporting goods, delivering healthcare) or a transient workflow? If it's transient, you're building a feature, not a company.

2. Don't feed the horse petrol. Don't inject AI into an existing process and call it transformation. Design the process around what AI is actually good at (continuous inference, pattern recognition, tireless research) and leave judgment, creativity, and relationship formation where they belong: with humans.

3. Build the robot, not the interface. Your competitive asset is not a chat window. It's a containerized, continuously learning agent with proprietary knowledge, a trained skill model, and a feedback loop that compounds over time.

4. The moat is the skill. Fine-tune your own model. Build your own vector knowledge base. Encode your domain expertise into an agent specification nobody can read from the outside. The knowledge is table stakes. The skill is the advantage.

5. Keep the human in the judgment seat. Not because AI can't eventually make those calls, but because behavioral feedback from human judgment is the training data that makes your system better than everyone else's.

There's a version of the AI revolution that's just faster email. And there's a version that's something genuinely new: distributed, domain-specific, continuously learning intelligence that amplifies human judgment rather than replacing it. One of those versions matters. One of those versions creates value that compounds.

Govind and Morten, in their different ways, are both building toward the second version.

Brewra is approaching it from the intelligence-gathering side — building a shared context layer and signal engine for sales teams that want a research department they couldn't otherwise afford. Govind is approaching it from the architecture side — figuring out how non-technical founders can deploy and own their own autonomous systems before the window closes.

The horse doesn't run on petrol. It never did.

But if you design the right vehicle, it turns out the road ahead is much longer — and much more interesting — than anything the horse could have managed anyway.

This article was produced from a live conversation recorded on March 11, 2026.

Transcript processed and article drafted with the assistance of Claude (Anthropic).